2017 Edition

Welcome to the Winter 2017 Sagesse Publication!

1. Introduction – Sagesse Winter 2017 (384 KB)

by Uta Fox, CRM, ARMA Canada, Director of Canadian Content

2. Memory as a Records Management System (471 KB)

by Sandra Dunkin, MLIS, CRM, IGP and Cheri Rauser, MLIS

3. RM in Canadian Universities – The Present & Future (329 KB)

by Shan Jin, MLIS, CRM, CIP

4. Electronic Recordkeeping – From Promise to Fulfillment (370 KB)

by Bruce Miller, IGP, MBA

5. D’archivage électronique – De la promesse à l’accomplissement (439 KB)

par Bruce Miller, IGP, MBA

6. From Chaos to Order – A Case Study In Restructuring a Shared Drive (957 KB)

by Anne Rathbone, CRM and Kris Boutilier

Meet the Authors

Sandra Dunkin, MLIS, IGP, CRM, is the Records & Information Management Coordinator for the First Nations Summit Society. Sandra is also currently the Program Director for the ARMA Canada Conference, Chair of ARMA Vancouver’s First Nations RIM Symposium Committee, and a member of ARMA International’s Core Competencies Update Group.

Currently working as an academic librarian in online distance education, Cheri Rauser, MLIS, enjoyed exploring the current state of cognitive informatics and neuroscience while collaborating on this article. Besides working in academic librarianship, Cheri has been employed as a university lecturer, museum cataloguer and moving image archivist. Other research interests include the role of the library in accreditation and web-based indexing vs paper indexing.

Shan Jin, MLIS, CRM, CIP, is a Records Analyst/Archivist at Queen’s University Archives. She earned a master’s degree in library and information studies from Dalhousie University and is a Certified Records Manager and a Certified Information Professional. She has contributed to several ARMA technical reports. Shan can be contacted at jins@queensu.ca.

Bruce Miller, IGP, MBA is President of RIMtech, a vendor-neutral records technology consulting firm. He is an author, an educator, and the inventor of modern electronic recordkeeping software. The author of “Managing Records in Microsoft SharePoint”, he specializes in the deployment of Electronic Document and Records Management Systems.

With a twenty-two year career in Local Government IT, Kris Boutilier has overseen numerous reinventions of technology: from DOS 3.2 to Windows 10, 300 baud dial-up to 100Mbit broadband, and the IBM Selectric to Office 365. Transitioning from Physical to Electronic Records Management has proved the most challenging undertaking yet. Contact Kris at Kris.Boutilier@scrd.ca.

Anne Rathbone, CRM, has 20 years of RIM experience, all with local governments. She was one of the leaders on the shared drive project, provides all staff training on the new shared drive and is responsible for maintaining the integrity of the new framework. She echoes Kris’ sentiments about e-records. Contact Anne at Anne.Rathbone@scrd.ca.

Sagesse: Journal of Canadian Records and Information Management an ARMA Canada Publication Winter, 2017 Volume II, Issue I

Introduction

Welcome to our second issue of Sagesse: Journal of Canadian Records and Information Management, an ARMA Canada publication!

In March 2016, ARMA Canada launched its first issue of this publication under the working title of Canadian RIM, an ARMA Canada Publication. At the same time, we announced a contest inviting the ARMA Canada membership to suggest a title that focused on Canadian records and information management and information governance. Deidre Brocklehurst, from Surrey, British Columbia, suggested Sagesse: Journal of Canadian Records and Information Management, which was the top choice of the Canadian Content Committee, now called Sagesse’s Editorial Review committee (the committee). We congratulate Deidre on such an appropriate title.

Sagesse (pronounced “sa-jess”) is a French word meaning wisdom, good sense, foresight and sagacity which is most appropriate for the mandate of ARMA Canada’s publication. It embodies Canada’s unique heritage as well as instilling knowledge, wisdom and common sense.

Sagesse’s Issue I in 2017 features the following articles:

- “Memory as a Records Management System,” by Sandra Dunkin and Cheri Rauser, highlights how our brains process, organize and retrieve information through memory and recall patterns, and applies those insights to records management systems. This is indeed a unique approach to records management.

- Shan Jin presents an interesting and thorough discussion on records management in Canadian universities in her article entitled, “Records Management in Canadian Universities: the Present and the Future.” She provides a comprehensive view on current records management practices in Canadian universities.

- In “From Promise to Fulfillment: The 30-Year Journey of Electronic Recordkeeping Technology,” Bruce Miller shares his intriguing personal journey in the development of electronic recordkeeping software technology. This article is also translated into French.

- And, Anne Rathbone and Kris Boutilier provide a case study on the Sunshine Coast Regional District in British Columbia and their enticing and ambitious undertaking of restructuring a shared drive used by all employees in their article, “From Chaos to Order – A Case Study in Restructuring a Shared Drive.”

We’d also like you to be aware that there is a disclaimer at the end of this Introduction which notes that the opinions expressed by the authors are not the opinions of ARMA Canada or the committee. If after reading any of the papers you find that you are in agreement or have other thoughts about the content, we would certainly like to hear them and urge you to share with us. We’ll try to publish your reflections in our next Issue. And if you have any recommendations about our publication please share these as well. Opinions and comments should be forwarded to: armacanadacancondirector@gmail.com.

What goes into putting this type of publication together? First of all, we need you and your RIM-IG experiences! Then, Sagesse’s volunteer Editorial Review committee is on hand to assist you. We have received some amazingly unique articles for publication and we applaud our authors for their dedication to our Canadian industry.

It takes time to prepare each edition, from the point when we approach authors to when we actually are able to publish an article. Information about the types of articles we are interested in and the process through which the articles go is available on ARMA Canada’s website – www.armacanada.org – see Sagesse.

One other item I would like to draw your attention to is our shameless promotion of a session at ARMA Canada’s upcoming conference in Toronto, ON, in 2017. Two of Sagesse’s Editorial Review committee members, Christine Ardern and John Bolton, will deliver a presentation on writing for Sagesse – something we encourage each of you to pursue.

Enjoy!

ARMA Canada’s Sagesse’s Editorial Review Committee:

Christine Ardern, CRM, FAI

John Bolton

Alexandra (Sandie) Bradley, CRM, FAI

Uta Fox, CRM, Director of Canadian Content

Stuart Rennie

DISCLAIMER

The contents of material published on the ARMA Canada website are for general information purposes only and are not intended to provide legal advice or opinion of any kind. The contents of this publication should not be relied upon. The contents of this publication should not be seen as a substitute for obtaining competent legal counsel or advice or other professional advice. If legal advice or counsel or other professional advice is required, the services of a competent professional person should be sought.

While ARMA Canada has made reasonable efforts to ensure that the contents of this publication are accurate, ARMA Canada does not warrant or guarantee the accuracy, currency or completeness of the contents of this publication. Opinions of authors of material published on the ARMA Canada website are not an endorsement by ARMA Canada or ARMA International and do not necessarily reflect the opinion or policy of ARMA Canada or ARMA International.

ARMA Canada expressly disclaims all representations, warranties, conditions and endorsements. In no event shall ARMA Canada, its directors, agents, consultants or employees be liable for any loss, damages or costs whatsoever, including (without limiting the generality of the foregoing) any direct, indirect, punitive, special, exemplary or consequential damages arising from, or in connection to, any use of any of the contents of this publication.

Material published on the ARMA Canada website may contain links to other websites. These links to other websites are not under the control of ARMA Canada and are merely provided solely for the convenience of users. ARMA Canada assumes no responsibility or guarantee for the accuracy or legality of material published on these other websites. ARMA Canada does not endorse these other websites or the material published there.

Authors’ Foreword to Memory as a Records Management System

This paper was originally written in 2000 as a required assignment for the University of British Columbia’s (UBC) School of Library, Archival and Information Studies’ (SLAIS) LIBR 516: Records Management course, taught by Alexandra (Sandie) Bradley. The paper was subsequently published in the ARMA Vancouver Chapter newsletter, VanARMA, Volume 35, Issue 7, February 2004 (see newsletter introduction below).

The authors thoroughly enjoyed the initial collaborative and creative process when first drafting this paper as students and were thrilled to be asked to revisit this topic for Sagesse. The original paper now seems charmingly naïve in parts and certainly dated as we reference ‘palm pilots’ and other outdated technology concepts and limitations of the year 2000. And yet much of the substance of our original thesis is still compelling over the intervening 16-year period of development.

In the years since the paper was first written and published, the authors have been influenced by the many changes occurring in the fields of library science and records management, neuroscience, technology research and development, the documented challenges to cultural biases around collective memory as well as the growth in our own understanding stemming from personal experience and increased professional knowledge as our careers progress. The authors have endeavoured, in this iteration, to update our understanding of these and other topics through the exploration of new research into neuroscience and jurisprudence, as well as including an expanded discussion of collective memory/oral traditions as a valid means of historical/cultural record keeping, with a particular focus on Canadian Aboriginal oral culture resulting from one of the authors’ professional employment experience.

The original paper remains almost in its entirety (with quotes referencing palm pilots and all); however, it has been gently restructured to fit within the context of our new research and expanded thesis development. The original introduction to the paper from the VanARMA publication has also been retained below as it is no longer available in print or online. It has been a joy to revisit this topic and review new research to expand our own understanding of this complex topic.

Sandra Dunkin & Cheri Rauser, Vancouver, BC September 2016

VanARMA Introduction to Memory as a Records Management System, February 2004:

By: Sandra Dunkin, VanARMA Newsletter Editor

This month features an article on how the human brain processes, organizes, and retrieves information through memory and recall patterns. It outlines many of the basic memory functions the human brain is capable of and compares them, more than favourably, with modern technological devices designed to recreate those very processes artificially.

Many of us spend countless hours in front of our computers, structuring databases, creating classification and retrieval systems for records of all types on a variety of media. What if we could improve upon the document management systems we use daily by having them mimic our natural cognitive processes?

As technology speeds ahead with new, bigger and faster means of storage and retrieval of records, we have a unique opportunity, Muse-like, to inspire software designers, hardware engineers and other assorted computer geeks on how to create better utilities to manage these masses of information. We already have voice recognition capability, but what about making access points to data storage more flexible, more “human”?

The human brain has a long history of storage and retrieval processes with a plethora of access points that can be as humorous and surprising as they are effective. Databases and other software applications are the tools of our profession. Perhaps it is time to consider how we really want/need them to work for us.

Mnemosyne, one of the Titans of Greek mythology, Goddess of Memory and, by Zeus, mother of the Muses. According to Mary Carruthers (1996), memory was the most noble aspect of ancient and medieval rhetoric.

Oil painting by Dante Gabriel Rossetti, 1881. Collection of the Delaware Art Museum, Wilmington. Gift of Samuel and Mary R. Bancroft.

Memory as a Records Management System

by Sandra Dunkin, MLIS, CRM, IGP & Cheri Rauser, MLIS

Introduction

In the last 15-20 years there has been an exponential increase in the use of mobile technology, even though some twenty-first century Luddites bemoan our embrace of such technology. Those of us who appreciate its conveniences and rely on it to do our jobs want to understand how we can develop technology that draws on the human capacity to store and retrieve information, using the human brain as a template for future records management systems.

Rather than sound a warning bell of dire consequences if we don’t halt our engagement with mobile technology, the authors’ intention via this work is to highlight and validate the intrinsic value in human brain based oral memory creation and its application in records and information management (RIM). While acknowledging the inherent danger of information overload, the authors will explore the potential of orality in records management endeavours: past, present and future. Further, to highlight the value and the connections between what humans have always done and are working to improve, while still utilizing modern technology, the authors will explore some methods in which oral traditional cultures encode records in memory and discuss some of the ancient functions of oral records managers.

The Brain and Records Management

An early response to the increase in mobile technology came from Kate Cambor (1999), who suggested that people were becoming overly reliant on an “accumulating external memory network” of aides-memoire in the form of computers, palm pilots and other storage and retrieval devices (2). Cambor maintained that increasing dependence on such externalised media had been to the detriment of training our neurological filing cabinets (aka memory) to perform their autonomic tasks of classifying and retrieving data. Jim Connelly, CRM (1995) had made similar warnings four years earlier, suggesting that the brain’s capacity for retrieving information is a “common” records management tool that is being largely forgotten and ignored (35).

The authors maintain that records managers and information professionals are in the position of assisting us in managing the information overload that can result from access to far greater amounts of information than ever before. The professional skills and techniques of this group can be harnessed to help mitigate that potential overload through accessing the human brain’s innate capacity to make sense of what appears at first to be nonsensical and disconnected. The authors will show how the technology of the human brain and the methods that humans have employed to develop oral systems to store information (corporate memory) can provide clues for modern records managers to design systems that enhance, rather than work against, the human brain’s capacity for logical storage and retrieval of information (Wang, 2003).

Records and information management (RIM) professionals could, in the near future, plan information systems that take into account the logical mental cues that enhance people’s memories and therefore their ability to retrieve information from both mental storage facilities and computerised storage systems. According to Jim Connelly (1995), information and records managers need to recognise that “memory is . . . the most common information retrieval software known to man [sic]” (35). Understanding how the human brain stores information could enhance our ability to anticipate how the brain strategically files or searches for data within a central records management system, in a library database, an online catalogue, in an encyclopaedia or on the internet.

But, in order to employ the logic of human-filed memory, we must first understand how memory is encoded in the brain and the roles that oral memory systems have traditionally played in how individuals and, therefore, societies remember. To that end, the authors will review the concept of oral memory and oral traditions, as well as the science of the brain’s memory function. This context is essential in formulating theories for improvement of modern records management systems – storage, maintenance, retrieval and disposition. The tenacity and perseverance of oral records should inform the paradigm by which we approach modern RIM practice, especially in this age of Big Data and the proliferation of stored records.

Oral Traditions and the Written Record

Circa 2500 years ago, in the Phaedrus, Plato asserted that Socrates saw the development of alphabets as crutches that limited the capacity and usefulness of the brain as the central storage system for human knowledge. “Writing, far from assisting memory, implanted forgetfulness into our souls” (Plato 370 BC, 274c-276e, Translation by Fowler 1925, and Kelber 1995, 414). Using the written word during this time and in the context of aeons of aurally transmitted records management culture was rather like everyone keeping a personal copy in today’s automated office environment. Plato further asserts that Socrates believed that written words were antisocial because they segregated themselves from living discourse, suggesting that, much like painting, “writing maintains a solemn silence”; they stare at readers, telling them “just the same thing forever” (Plato 370 BC, 274c-276e, Translation by Fowler 1925, and Kelber 1995, 414).

In the early Renaissance, the rise of Gutenberg’s printing press made the written record more accessible and led to the broad dissemination of various religious and political ideologies and propaganda that threatened the powerbase of the aristocratic rulers of Europe. Printing was initially viewed with suspicion and contempt as a means of disseminating unapproved and non-authoritative information, and printing in the Renaissance period remained largely exclusive to the literate elite of society. The eventual democratization and broad distribution of written information over time has been largely beneficial to society, while at the same time diminishing the experiential aspect of dialogue and comprehension and contributing to the decline of oral memory records and oral history as true and credible accounts. The advent of writing may therefore be credited with the degradation of the value of the human memory in the management of authentic historical records.

So, just how credible and reliable an authenticator is the written record? The written record is a subjective snapshot caught in time; it is static and serves to externalise individual and collective memory. If only one person’s written account of an event survives, that person’s perception becomes the permanent record, complete with that individual’s subjective biases and interpretation.

It must also be noted that the written record is often ephemeral in nature, subject to all varieties of destruction and disaster (fire, flood, political ideology, and disintegration of the materials on which it is recorded). And once destroyed, it is lost forever. Survival of early written records is, therefore, inconsistent and often a matter of chance.

In contrast, the oral record is a living entity and as such the collective oral memory is more reliable by right of common ownership within the entire community or culture. It is in essence collectively ‘authenticated’ and preserved. The oral tradition is derived from the community’s sense of what happened and what is important to preserve. “Memory, not textuality, was the centralizing authority” in cultures based on oral tradition (Kelber 1995, 417). The oral or memory record is passed on as a living entity that changes with new understanding and belief about the event, thereby reflecting the community’s, rather than the individual’s, belief about the truth of the record. Rather than being subjective or revisionist, oral records reflect a composite of understanding that is enriched with time and interpretation.

Updating the information contained in oral records is simply a matter of updating your memory or belief about a certain event or idea. According to Ginette Paris (1990),

“The memory at work in oral cultures allows for modification and adjustment, sometimes reversing the meaning of an event. It’s an active memory, which breaks into consciousness through archetypes, dreams and myths, fantasies, symbols and artistic work. It selects and organizes the past, putting into context what is recollected” (121).

Once something is committed to writing, it often becomes the ‘official’ version of the event and it becomes the permanent record. The problem with this process is that the written record is by nature static and inflexible. It may be superseded by another version, whether the original version remains intact or is physically destroyed. So, rather than seeing the rewriting of history in a contemporary context as dangerous revisionism, we can see it as an attempt to recapture the experience of living oral history. The written record can and has been used as propaganda that may seriously alter our perception of past events, especially if only one subjective version is maintained.

For example, many of us view the words attributed to Elizabeth I in the speech at Tilbury of 1588 as an accurate and contemporary record; however, the only surviving written account exists in a letter of Leonel Sharp in 1624. The existence of such a record at 36 years removed is concrete evidence of oral tradition at work and accepted into the corpus of so-called authentic written records. The irony of this example is that a culture that colonized and dismissed oral tradition has itself relied on orality to lay claim to instances of its own history and culture. Additional cross-cultural examples are found in complex religious belief systems where the devout accept as a matter of faith that the accounts, recorded long afterwards from oral tradition, are true renderings of the events. In certain contexts, the disdain for oral traditions can be equated with a cultural racism and the desire to dominate over ‘other’ cultures, providing justification for egregious exercises of power.

In contrast to the ancient world of Homer and other oral-tradition records managers such as the Greek – Aoidos, Anglo-Saxon – Scop, Irish – Poet-Ollam, Italian – Cantastorie, French – Jongleur, English – “Singer of Tales,” the modern world faced by information and records managers:

“is complicated and we are inundated with information as never before. So instead of straining our own frail memories, we arm ourselves with an array of elaborate aides-memoire; rolodexes, filofaxes, palm pilots and of course computers. Such aids have existed in one form or another throughout written culture, . . . their growing use is evidence that the locus of memory itself has left our individual, biological memories and is now part of an “accumulating external memory network” (Cambor 1999, 2).

Over the centuries, writing has alienated a large segment of the world’s population. Indeed, until the 20th century, the majority of the world’s population had been illiterate: their learning and knowledge had been based on oral traditions. It is a significant cultural loss that cross-culturally, with notable exceptions, we have lost the capacity to exercise our brains in more than the basic autonomic processes necessary to encode memory. Today, our capacity to remember and retrieve volumes of information is so diminished that we now seek artificial memory enhancement – evidenced in the growing use of ‘natural’ pharmaceuticals such as Ginkgo biloba, and other forms of brain training (Lumosity, for example).

Because they operated in an oral tradition, ancient oral rhetoricians (poets, bards, statesmen etc.) had to train their memories by using devices such as alliteration, rhythm, rhyme, stock epithets and synonyms. Irish poet-ollams (several levels of expertise above a bard) spent at least 12 years of their lives in a poet’s apprenticeship, training and memorising the volumes of tales necessary to their trade (MacManus 1967, 179-180). Genealogical inventories and epic histories were two of the methods employed by oral record-keepers and rhetoricians to classify, store and retrieve information vital to their culture and to their professions, and practitioners were highly regarded and respected within their cultural base.

Elaborate genealogical charts, such as those invented by the poet-ollams of pre-alphabetic literate Ireland, conveyed the familial and cultural history of a people through lineage as described in the epic Táin Bó Cúailnge (The Cattle Raid of Cooley). Futuristic societies such as the Klingon Empire, invented by science fiction writer Gene Roddenberry, are based on the Anglo-Saxon culture that values lineage, heritage and honour above all else. And the Judeo-Christian Bible is replete with genealogical inventories covering hundreds of years of corporate memory. All of these inventories were oral in origin, lasting for hundreds if not thousands of years in that form before being written down.

Cultural history is therefore corporate memory and it is in danger of being lost to our over-reliance on media outside ourselves. Just as oral tradition was largely replaced with written records that may or may not be credible, external technology designed to remember tasks on our to-do lists, or to record long-term memory, is replacing even the simplest brain based records management tasks.

Children raised in cultures built on oral tradition access their cultural heritage through oral records stored in memory banks located in the memory of every member of that culture. Children raised in alphabetic cultures learn that the written word, or contemporaneously the televised image, is the path to self-knowledge and cultural comprehension – televised entertainment as the official record-keeper of our culture. And if you don’t have time, you can just record the information for later, contributing to a possible erosion of our innate ability to use our brains to record information and to access it when needed at a later date.

Human brain-activated and recorded oral tradition/history preserves the corporate memory of families, societies and entire civilisations. Individuals brought up in an oral tradition are trained to process, store and retrieve large volumes of information by activating the enormous capacity of the human brain to organise, store and retrieve information in the form of memories. In oral traditional cultures, the brain’s capacity for memory enhancement, storage and retrieval has been used as the means of training each successive generation to remember their collective history and to preserve that culture’s vital records. Hence, archaeologists were able to find the city of Troy from the account of Homer’s Iliad, a collection of oral traditional stories spanning 8-10 centuries of Aegean cultural history, attesting to the veracity of oral record keeping in the absence of written records.

The Canadian Oral Tradition Context

The past disdain for oral veracity in record-keeping is notable in the history of the Canadian Federal and Provincial Justice systems with regard to Aboriginal oral history and oral traditions. However, there has been a subtle shift in the perception of collective memory and oral traditions in recent Canadian jurisprudence. This change reflects a reversion to previous generations’ respect for the orality of information and intellectual discourse. The emphasis on the written record as the primary authoritative record for corroboration of events and transactions is being challenged, with a shift away from the written authority on which western culture has based its educational, judicial and cultural institutions and practices.

The landscape is definitely changing, with such landmark decisions as Sparrow, Guerin, Delgamuukw and Tsilhqot’in, in which recognition and respect for the collective memory has prevailed over the judicial requirement for documentary evidence referencing a pre-literate era in Aboriginal societies. Justice Vickers stated in his decision that:

Courts that have favoured written modes of transmission over oral accounts have been criticized for taking an ethnocentric view of the evidence. Certainly the early decisions in this area did little to foster Aboriginal litigants’ trust in the court’s ability to view the evidence from an Aboriginal perspective (Tsilhqot’in v. British Columbia, 2007 BCSC 1700, para. 132).

And later,

Rejecting oral tradition evidence because of an absence of corroboration from outside sources would offend the directions of the Supreme Court of Canada. Trial Judges are not to impose impossible burdens on Aboriginal claimants. The goal of reconciliation can only be achieved if oral tradition evidence is placed on an equal footing with historical documents (ibid, para. 152).

This recent development in Canadian jurisprudence also supports the respect for Aboriginal oral history and oral traditions, wherein the majority of early post-contact western society was largely also illiterate in the alphabetic sense. John Ralston Saul (2008) suggests that: “We all understand that in the eighteenth and nineteenth centuries most Aboriginals were illiterate; they could not read and write in European languages. But then neither could most francophone and anglophone Canadians. … our voting citizens were largely illiterate. Our democratic culture was therefore oral” (126).

In the case of Aboriginal record keeping, “ground-truthing became difficult if not impossible to accomplish because there may not have been Aboriginal individuals able to communicate with the authors of the contemporary record in either English or French” (Interview with Howard E. Grant, Executive Director, First Nations Summit Society, September 2016). According to Mr. Grant, context has also been a major impediment to understanding. For instance, the questions ‘do you live here?’ and ‘are you from here?’ were often unclarified in the sense of the local (house) or broad (region/neighbourhood) context as understood in Aboriginal culture. Mr. Grant referenced as an example the case of BCCA 487, Docket CA0727336 regarding the Kitsilano reserve lands appropriated by Canada for the development of CP Rail services in Vancouver. There are countless known cases of land appropriation that may be linked to the cultural differences in defining what constitutes occupying and/or owning specific parcels of land. Add to that cultural perceptions about ownership: an insistence that only historically recent paper records could denote ownership, whereas cultural memory, however lengthy, was not legitimate proof of either occupancy or ownership.

Another major impediment to understanding is the obvious loss of meaning in translation from western languages to Aboriginal ones and vice versa, and the subsequent misinterpretation of context within the translation between different cultural norms. The modern practice of anthropology, used in many legal proceedings through expert testimony is, in many cases, flawed due to its inherently western bias, usually requiring some form of independent corroboration of the oral tradition evidence. Essentially the corroboration of generations of oral record-keepers acting collectively and collaboratively was not perceived as equal to the written record.

Transfer of knowledge in Pacific Coast Aboriginal cultures was once achieved through observation and social interaction within the home and the community, wherein the next generations would ‘absorb’ and learn the cultural heritage and complex government systems of their individual tribe (Interview with Howard E. Grant, October 2016). Further, the Aboriginal oral traditions do not allow for ‘shortcuts’ when their content is shared with subsequent generations: as a result, when Potlatches are held, oral traditions are strictly maintained without deviation (ibid.).

With the advent of western cultures in North America, the ‘Si-yém’ (a Coast Salish term meaning “respected one(s)”, denoting the wisdom, knowledge and experience of the individual(s) so named) understood that change was inevitable, and they recognised that the younger population must become educated and acquire the tools and training necessary to replace oral record keeping. This was the beginning of a transition away from dependence on oral records in favour of written ones; however, it is still understood that careful recording is essential to protect their culture and history from misinterpretation by non-aboriginal observers (ibid.).

At present, most aboriginal communities are not necessarily seeking to re-establish an oral culture; rather, they are striving to maintain their collective memory as it may be required or useful in litigation, as well as maintaining their unique cultural and historical context. They are also seeking validation of their oral traditions insofar as they constitute the pre-contact/pre-literate record of their culture: there really isn’t a past or present divide, because the oral record exists in a continuum.

Reliance on the written record in legal matters is also relatively recent in the long history of civilization, being enshrined in evidence legislation as recently as the late 19th century (Canada Evidence Act 1893). In judicial systems, the slavish reliance on written records as corroboration often neglects the fact that written records can misrepresent or lie on matters of historical fact to benefit one party over the other. Certainly in Canada the necessity for written documentation is at odds with historical practice, given the multicultural nature of the emerging pre-twentieth century Canadian populace and the need to “relate to power mainly through the oral” (Saul 2008, 127).

Memory Systems: Storage

If the “basic characteristic of the human brain is information processing” (Wang 2003), then those ancient [e.g. Celtic and Homeric Greek] societies and modern oral-traditional-based cultures such as Canadian First Nations developed sophisticated oral recordkeeping systems by building on the human brain’s natural capacity. They were not anomalies, inventing for the sake of necessity, and were certainly not primitive precursors to the written and electronic record-keeping norms of modern culture. Cultural practices that enhance memory storage, such as storytelling, singing and mnemonics, encouraged the development of the human brain to act as the receptacle of both individual and corporate cultural memory.

Current brain research informs our understanding of just how successful the human brain is as a storage and retrieval tool. Some studies in cognitive informatics suggest that the human brain is the most viable model for future generation computer and information retrieval systems that do not employ the brain-as-container metaphor, positing that memory is stored and retrieved in a relational manner (Carr 2010; Wang 2003). It seems that there is a “tremendous quantitative gap between . . . natural and machine intelligence”, with the gap favouring human brain capacities (Wang 2003). As a system, the human brain already discards, stores and retrieves information in a manner that is “more powerful, flexible and efficient than any computer system” (Wang 2003). This is consistent with oral record keeping, which allowed for the revision of the story based on new information concerning the corporate cultural memory. This was considered best practice in oral record-keeping.

You have to begin to lose your memory, if only in bits and pieces, to realize that memory is what makes our lives. Life without a memory is no life at all, just as an intelligence without the possibility of expression is not really an intelligence. Our memory is our coherence, our reason, our feeling, even our action. Without it, we are nothing.

~Luis Buñuel

Simply put, the human brain’s neural pathways encode memories. Every time the brain gets new information it compares it to old information and forms new connections (Arenofsky 2001; Wang 2003). If our brains were really just containers, all the information we start acquiring as babies would overflow a rapidly depleting capacity and we would have no more room. The human brain would be a storage facility with no structure or capacity for retrieval. We would all be serious hoarders with limited ability to make sense or use of the memories we had acquired and stored, and our users would be tripping over the boxes in the hallway, filled with content that had no discernible relationship. Instead, we are able to use our brains to organize in a relational fashion and thereby make accessible to us the content that we need to make sense of our world and our lives. Just like any good data management system should.

But how does the human brain do what it does so well? How do our brains function and allow the development of such sophisticated oral recordkeeping? What part of the brain is responsible for memory formation and why are memories so important to human creative capacity and technological endeavours? What are the implications for future systems development?

Memory formation and storage is a multi-step and layered activity that takes place in the deeper structures and functions of the brain, most importantly the hippocampus, which saves short-term episodic memories and prepares them for long-term storage, and the neo-cortex, the long-term storage facility (Hsieh 2012). While there are several memory systems, each serving a different purpose (Bendall 2003), there are two structures coordinating multiple activities between the long-term and short-term memory systems. Within the frontal cortex is the short-term or working memory system, storing new information while keeping us actively conscious of what is being learned and saved. Within this short-term system are the phonological loop (e.g. silent talking to oneself or learning new words) and the visuospatial sketchpad that makes it possible to manipulate images in our minds. Finally, the central executive system keeps us aware of those short-term memories and coordinates both the sketchpad and the loop. The neo-cortex is, in evolutionary terms, a relatively recent addition to our genetics (Rakic 2009). “If any organ of our [human] body should be [seen as] substantially different from any other species, it is the cerebral neocortex, the center of extraordinary human cognitive abilities” (Rakic 2009, 724). It is the crux of our creativity and the uniquely human biological innovation that allows us to develop long-term memory storage systems that rival modern electronic records management systems.

We know from studying the development of human infants and children, that experience is crucial to the formation of memory. If you hear something just once the neurons often do not release enough chemicals to make a lasting impression on the formation of neural pathways or on memory retention. But if you associate, for example, a telephone number with a visual image such as a person or a place, or the warm chest of your parent with feeling safe and content, then there is more neuronal activity. The more neuronal activity there is, and the more experiences you have to stimulate that activity, the more likely it is that deep memories will be stored in the long-term memory systems of the neo-cortex (Hermann 1993 and Bendall 2003). Episodic memory (experiences) that may be recalled and played over in the mind is a crucial step in the transfer of that recent memory into long-term memory storage.

The hippocampus has been heavily studied concerning its importance to the formation of long-term memory. “A sea-horse-shaped region tucked deep in the folds of the temporal lobe above the ear” (Carmichael 2004, 50), the hippocampus stores recent episodic memories. Those memories are then rehearsed in our minds and eventually stored as long-term memory (probably during sleep) within the neo-cortex (the outer layer of the brain). It is the repetition and recollection that stimulate the hippocampus to start the process of creating long-term memories that can be later accessed. Children that request the same bedtime stories about the events of their day, over and over, are in effect committing to memory a synopsis of the day’s activities in a process of encoding their family history and committing to memory the deeds of the collective. Watching television or videos is generally a non-experiential activity, therefore the accompanying neuronal activity is much less than reading a book, playing in the sandbox, doing a puzzle or telling stories out loud. The type of activity will determine the degree of information retention in the hippocampus, further initiating the transfer of that experience into memory and determining what is eventually available to the person as accessible knowledge in the form of memories.

Your memory is a monster; you forget—it doesn’t. It simply files things away. It keeps things for you, or hides things from you—and summons them to your recall with a will of its own. You think you have a memory; but it has you!

~John Irving, A Prayer for Owen Meany, 1989

It is now understood that babies are born without the significant neural pathway development associated with the adult brain. The information and experience children are exposed to will create the neural pathways or synaptic connections in their brains, or, not create them in the case of those who are neglected or abused as youngsters. Since children are not born literate, in the alphabetic sense, they spend these crucial few years as learners in a primarily aurally receptive environment, completely dependent on other people to define and determine the nature of their experiences and therefore the type of memory that will be stored in the neo-cortex. Children’s preferred method of exploring the world and learning is through play, not through rote learning or blanket memorization. Neural pathway development and later ability to store and retrieve information in the adult brain is dependent on children being exposed to a wide variety of repetitive and fun activities that stimulate neuronal activity in the brain and cause the creation of neural pathways.

This is not to say that only children have the capacity to form significant new pathways in their neural networks or that later in life memory formation and big learning are outside the capacity of the adult human brain. In the past it was theorized and held as true that once you were past the baby stage, the brain became fixed, with little capacity to learn new things or heal from trauma. Scientists now know that the brain is a “work-in-progress” (Arenofsky 2001), with a healthy brain exhibiting the capacity to learn, change, grow and create new pathways until our end date. The human brain is essentially scalable. Like a well-built records management system, the brain can accept new information and accommodate new systems, incorporating and working with them in ways that were originally not anticipated.

Memory Systems: Retrieval

The human brain makes connections to what it already knows and decides what to keep. No multiple versions of the same memory file are cluttering up our hard drives and getting us into trouble when we send our boss the wrong version. But, we know that it’s easier to forget information that is new, different or that we don’t care about. We rank memories according to their relevance to us and that relevance is determined by how much we care. People can put to memory large amounts of information to do well on an exam and then forget it all in a short period of time or over the summer break. Even those with so-called eidetic or photographic memory must care or find relevant the information put to memory or they will not be able to retain it. If one had true eidetic memory they would not be able to prioritize or find meaning in the memories acquired and it would be a huge data dump with no retrieval system to help mediate between the brain and the information (Schmickle 2010). We are instead the inheritors of a very sophisticated “command center” the size of a grapefruit, exhibiting extraordinary powers for storage, retrieval, relevance ranking and forgetting (aka culling, pruning, throwing away) (Arenofsky 2001).

How different is the human brain memory retrieval system from a computer? Is human memory similar to the RAM in a personal computer? (Freed 1997, 1). According to Kate Cambor (1999), “Something memorized by a computer is not the same as something memorized by a human.” For memory systems that store the most stable long-term memories it is not known how much maintenance they require. But, it is unlikely that human memory systems need maintenance in the same way as computer RAM, which basically involves the maintenance of current through a circuit. “There is no bio-electric [sic] system running current through our brains,” rather the “electric nature of the neurosystem comes from interactions between individual neurons and the task of maintaining this electric system requires only keeping the cells alive and keeping them connected to their neighbouring cells” (Freed 1997). In effect, we maintain our brain’s records management system by exercising our capacity for storing and retrieving memories: by experiencing life through all of our senses and exercising our natural capacity to manage information. Of course human memory can be disrupted by accident or disease, but the innate capacity of the human brain to store memory is not in question here. Since the hippocampus is critical for normal memory function (Hsieh 2012), we now know that if we damage or develop disease in that deep brain memory making place, then we have compromised our capacity to create retrievable memories in most systems of the memory making areas of the brain (Bendall 2003).

Human memory creation and long-term storage is admittedly a sophisticated and complicated process, but how is it that we retrieve those memories once they are stored? Human memory incorporates a variety of ‘search fields’ in the form of associational cues in order to retrieve that information. The brain cross-references those memories through a series of sensory triggers that index the relationships between our experience and the images, sounds, smells, emotions, colours, sensations and intonations that we associate in our memories with that experience. In order to function as an information management system, the brain has developed into a sophisticated structure that relies on retrieval schematics or cues such as mnemonic formulas (memory keys) designed to file and retrieve information/data in the form of memory. The neo-cortex, the records management system that houses those long-term memories, functions as a “context-dependent rather than location-addressable memory system” (Marcus 2009).
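The distinction between location-addressable and context-dependent retrieval can be made concrete with a small sketch. The Python below is only an illustration of the idea, not a model of the brain: the `shelf` list stands in for storage that is reached by position, while the hypothetical `cue_index` files each memory under every cue associated with it, so that any cue (or combination of cues) can trigger recall. The memories and cue names are invented for the example, drawing loosely on images used in this article.

```python
from collections import defaultdict

# Location-addressable storage: a record can only be fetched by its position.
shelf = ["grandmother's stories", "summer at the lake", "piano lessons"]

# Context-dependent storage: each memory is filed under every cue tied to it.
# All cue names here are illustrative assumptions.
cue_index = defaultdict(set)


def remember(memory, cues):
    """File one memory under each of its associational cues."""
    for cue in cues:
        cue_index[cue].add(memory)


def recall(*cues):
    """Return the memories associated with all of the given cues."""
    matches = [cue_index.get(cue, set()) for cue in cues]
    return set.intersection(*matches) if matches else set()


remember("grandmother's stories", ["smell of scones", "kitchen", "childhood"])
remember("summer at the lake", ["smell of pine", "childhood"])
remember("piano lessons", ["every good boy deserves fudge", "childhood"])

by_address = shelf[0]                        # must already know where it sits
by_cue = recall("smell of scones")           # a single sensory trigger suffices
by_two_cues = recall("childhood", "smell of pine")
```

In the first case the searcher must already know where the record was put; in the second, any one of several unrelated-looking cues leads back to the same memory, which is closer to the associational retrieval described above.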

The associational retrieval cues operating in the human brain are the ‘colour-coded labels’ that trigger retrieval of information from long-term memory storage. For instance, your grandmother’s stories from childhood might be inextricably linked to the cue of the scent of baking scones. The difference between human memory retrieval and computerised retrieval is that the brain always maintains the context of the record wherever it files it in our memory, regardless of how difficult it sometimes is to initiate recall. Hence, the taste of an exceptional wine enjoyed in the company of good friends on a beautiful day can never be repeated in exactly the same way and the smell of baking scones is forever linked to your grandmother.

Nothing is more memorable than a smell. One scent can be unexpected, momentary and fleeting, yet conjure up a childhood summer beside a lake in the mountains; another, a moonlit beach; a third, a family dinner of pot roast and sweet potatoes during a myrtle-mad August in a Midwestern town. Smells detonate softly in our memory like poignant land mines hidden under the weedy mass of years. Hit a tripwire of smell and memories explode all at once. A complex vision leaps out of the undergrowth.

~Diane Ackerman, A Natural History of the Senses

Memory records also differ from paper or electronic records in that the majority of memory records are not maintained or even delineated into a verbal format. All of our sensory organs are utilised in the creation of a memory record, and are rarely translated into a verbal or written record – human emotion is the prime example, most notably the sensation of fear. Language is an inadequate form in which to relay the complexity of most human perceptions, therefore the ‘hard copy’ or written form of human memory falls too far short of the actual experience.

The human brain is not homogenous as a records management system, but displays the attributes of flexibility and scalability. While the basic structures are the same for all of us there are differences in how memory retrieval systems work in the individual, either due to neurological differences or sometimes from trauma. Animal scientist Dr. Temple Grandin (2016) suggests that her autistic brain functions much like a search engine.

My brain is visually indexed. I’m basically totally visual. Everything in my mind works like a search engine set for the image function. And you type in the keyword and I get the pictures, and it comes up in an associational sort of way (video).

Grandin is not unique in being a visual thinker: as an autistic person she is an extreme example of that mode of memory retrieval. Like all humans, and despite being completely visual in her thinking, Grandin retrieves her memories in an associational fashion, not as items out of a storage box but as contextual memory, in her case in image form. This is what all humans do, whether visual, textual or auditory thinkers. Human memories are stored through the process of experience and association. We experience and then we associate other factors to that experience, further encoding it into our long-term memory system. Associations then become the cues that allow us to retrieve the memory after we have created it. How we retrieve it, and what our preferred or default method is (visual, auditory or another sense), is up to the unique wiring of our brains.

Oral rhetoricians have traditionally employed systems of ‘aides–memoire’ or ‘memoria technica’ as generalized codes to improve their all-round capacity to remember and as an aid in oral and later in written composition. Simple rhymes are commonplace in many cultures, especially ones that are meant to help children remember basic concepts: “I before E except after C”. Acronyms and acrostics tend to be confused into one in our contemporary usage, with acronyms especially becoming the language of corporations and government throughout the world: IBM, AWOL. Acrostics for remembering the musical scale and how to spell arithmetic: “Every good boy deserves fudge” and “a rat in the house might eat the ice cream” help us to remember not only the deliberately filed information, but also trigger the retrieval of childhood memories of piano lessons and math tutors. The classic block numeric classification scheme that is employed by librarians and records managers world-wide is actually a grouping mnemonic used to classify lists on the basis of some common characteristic(s). Mental imaging or peg is a method of linking words by creating a mental image of them, such as remembering a grocery list by having the items interact with one another in a bizarre fashion that stimulates short-term memory creation. ‘Loci et res’ as a mnemonic system, involves assigning things a place in a space and can be explored through the science of architectonics (Parker 1977). What they all have in common is how they stimulate the memory systems of the brain to store and allow later retrieval of the information in the form of memory.

While the human brain is capable of making sophisticated associations and computations of diverse philosophical, mathematical and logical construction, computers by way of comparison, can make only limited associations based on their programming. Standard records management retrieval systems are therefore limited in their points of access.

Paper files are usually linked to alpha-numeric storage and retrieval patterns and computer databases have a limited number of search fields which require long-term planning and programming to prevent redundancy before the stored information has completed its active life-cycle. For example, you may design a personnel database, which includes a Social Insurance Number search field, which may become problematic when privacy issues come to the fore in the field of records management. Human memory, on the other hand, is unlimited in its storage and retrieval patterns. It is elastic – a stored record can be retrieved by a wide variety of methods, some seemingly unrelated to the actual information, for example: ‘déjà vu’.

Right now I’m having amnesia and déjà vu at the same time. I think I’ve forgotten this before.

~Steven Wright

The human brain has built-in collocating functions, syndetic structure and some pretty awesome authority control. One might think that relational databases used to store information in a records management system, such as a library database or on the internet would handle information in much the same way as the human brain. However, unless the database is programmed to collocate the records upon retrieval and thereby avoid duplication, then the resulting list will resemble those from a search on the internet. Someone has to tell those little bots to collocate, before they collect.
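To illustrate what telling a system to collocate might involve, here is a minimal Python sketch. It assumes a hypothetical authority file (`AUTHORITY`) that maps variant forms of a creator's name to one authorized heading, and a hypothetical `collocate` function that groups search hits under that heading and drops repeated titles. The variant name forms are invented for the example; only the authors and titles are taken from the Works Cited list.

```python
from collections import defaultdict

# Hypothetical authority file: variant name forms mapped to one heading.
AUTHORITY = {
    "saul, john ralston": "Saul, John Ralston",
    "john ralston saul": "Saul, John Ralston",
    "j. r. saul": "Saul, John Ralston",
}


def authorized(name):
    """Return the authorized heading for a name, or the trimmed name if unknown."""
    cleaned = name.strip()
    return AUTHORITY.get(cleaned.lower(), cleaned)


def collocate(hits):
    """Group (creator, title) hits under one heading, dropping repeated titles."""
    grouped = defaultdict(list)
    for creator, title in hits:
        heading = authorized(creator)
        if title not in grouped[heading]:
            grouped[heading].append(title)
    return dict(grouped)


# An uncollocated result list, as a plain keyword search might return it.
raw_hits = [
    ("John Ralston Saul", "A Fair Country"),
    ("Saul, John Ralston", "A Fair Country"),
    ("J. R. Saul", "A Fair Country"),
    ("Carr, Nicholas G.", "The Shallows"),
]

result = collocate(raw_hits)
```

Without the authority file, the three Saul entries would appear as three separate results; with it, they collapse into one heading with one title, which is the behaviour the paragraph above attributes to the brain by default.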

What the classical mnemonists and modern practitioners are doing when they deliberately design systems for memory aid is to take the process that the human brain naturally goes through to create memory records or metadata and enhance it to an extremely sophisticated level of data storage and retrieval. Remember that hippocampus, dependent on repetition and association to get experience/information into long-term memory. The sensory perceptions (images, sounds, smells, emotions, colours and sensations) that are associated with the creation of memory records in the human brain, become access points for retrieval. The context (who, what, where, when and why) plus the content (data, information, experience) form the metadata of the records management system.

Context = friends, wine, conversation, food

Content = debate on the divine nature of Christ

Memory Record/Metadata = names, faces, clothing, the lighting in the room, the paintings on the walls, the smell of wood-fire, the taste of the food
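Expressed as a data structure, the example above might look like the following Python sketch. The class name `MemoryRecord` and its field and method names are assumptions made for illustration; the values are those listed in the example. The point is simply that every contextual or sensory detail attached to the record can serve as an access point for retrieval.

```python
from dataclasses import dataclass, field
from typing import List, Set


@dataclass
class MemoryRecord:
    """Hypothetical record combining context, content and sensory metadata."""
    context: List[str]
    content: str
    sensory_metadata: List[str] = field(default_factory=list)

    def access_points(self) -> Set[str]:
        """Every contextual or sensory detail can act as a retrieval cue."""
        return set(self.context) | set(self.sensory_metadata)

    def matches(self, cue: str) -> bool:
        """True if the cue is one of this record's access points."""
        return cue in self.access_points()


dinner = MemoryRecord(
    context=["friends", "wine", "conversation", "food"],
    content="debate on the divine nature of Christ",
    sensory_metadata=[
        "names", "faces", "clothing", "the lighting in the room",
        "the paintings on the walls", "the smell of wood-fire",
        "the taste of the food",
    ],
)

found_by_context = dinner.matches("wine")
found_by_sense = dinner.matches("the smell of wood-fire")
```

A conventional database record would typically expose only a few of these fields for searching; here the content can be reached through any of its eleven associated details.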

Human versus Computer

Despite its recent lack of ‘exercise’, the human memory facility is so well constructed and organised that not even Martha Stewart could improve it. While human memory is also susceptible to viruses such as Alzheimer’s and total system failure such as amnesia, it does not require constant upgrades. Even if the human brain alters through evolution, there are not the same problems with transferring of data between software programs and hardware incompatibility despite language, cultural differences and time: the essence of experience appears to be constant. Technology, however, is rapidly replaced, upgraded and elaborated upon and quite often does not allow for retrospective software compatibility or hardware rewiring. The human memory facility remains constant; you do not have to plug in any new bits of organic matter to make it go faster, better or more colourful. It is inexpensive, portable, space saving, efficient, dust-free and does not require a battery of info-technicians to help you when you have a glitch. Access to electronically stored information, on the other hand, can disappear with the pop and fizz of a short circuit, a virus, or a malicious hack.

Human memory records are filed and retrieved through emotive sensations. They engage all of our senses, as we perceive the content of the message. Can you imagine a computer program giving you the feeling of immense contentment when you have retrieved that little bit of data? Because it is the experiential memory that supersedes the factual memory, when you are told a story, the environment has as much to do with the retention of the memory as the tale itself. Human emotive metadata, combined with modern records management storage and retrieval systems, could result in database retrieval systems that mimic the manner in which the human brain creates multi-layered retrieval cues, based on the senses and the emotions, later employed by the brain to retrieve the information.

It is evident that humans possess an incredibly sophisticated method of creating and storing memories that allows us to be creative thinkers and toolmakers. But, is it not a stretch to suggest that our brain’s capacity for such activities can seriously rival an electronic records management system or that it should be considered the template for future development activities in records and information management or in the field of computer science? Consider this:

In 1973, a Canadian psychologist called Lionel Standing showed volunteers a series of photographs of objects for about 5 seconds each. Three days later, the volunteers were shown paired photographs, one that they had seen before and the other new, and were asked to say which was familiar. Standing increased the number of photographs shown to each person to an astonishing 10,000 and still they managed to identify the ones they’d seen before, with very few mistakes. Although this experiment tested whether they recognised something put in front of them, which is much less challenging than recalling something without any external cue, the results suggest that some aspects of human memory are effectively limitless (Bendall 2003, 1).

Mechanistic storage and retrieval within a limited and compartmentalized human brain was the theoretical blueprint that underlay early computer science and that definition was used to theorize and make assumptions about brain development and capacity (Wang 2003). The recent theoretical shift in cognitive informatics towards developing technology based on the human brain with its infinite possibilities for storage and retrieval, has implications for our understanding of how humans organize information and retrieve it. Our current understanding of the human brain can and should influence how we design better systems that use our brain’s capacity for relational data management and relevance ranking. Essentially the human brain could be the model for a scalable solution to information storage and management.

The authors believe that respect for oral records management systems based on human memory can be restored through a more thorough understanding of the capacity of all humans to store and manage information. Through scientific understanding we can harness those capacities to enhance and design modern records management systems. We know that, at best, computers can store a billion bits of information. Human memory is capable of storing one hundred trillion bits of information (Paris 1990, 121) and making up to 500 trillion possible connections among the neurons of the brain (Arenofsky 2001). As suggested by Cambor (1999), most of us are awed by stories of people like:

The Greek statesman Themistocles, who in the 5th Century BC, is said to have been able to call by name all 20,000 citizens of Athens… [Any computer] could accomplish such a task of ‘memorization’ without even trying, but who would be impressed? Yet, if a person today did anything analogous, who wouldn’t be? (5)

Conclusion

Themistocles needn’t be seen as an anomaly in human brain capacity. Armed with a new understanding of how the human brain has been and can be employed in oral records management systems, Themistocles can represent that which is largely forgotten or underutilized: the potential of the human brain trained to efficient and effective storage and retrieval.

A computerized database is impersonal in that you may not be the one to plug in the information, but you are the one to retrieve it. You are not necessarily a participant in the creation of the record that is to be retrieved. Databases of the future could allow the creator and the user to interact with one another in the creation of living records that are altered or enhanced with each retrieval or storage of new information. External storage and retrieval systems may benefit enormously from a more extensive examination of human memory with a view to developing systems that are more intuitive and responsive to natural human processes. Human memory is akin to data in five dimensions, layered with perceptions from each of the senses, creating a comprehensive and experiential understanding of the information. It is likely, with the acceleration of technology, that innovations that incorporate some of these factors will emerge in the near future. We already have artificial intelligence and virtual reality enhancements available in the marketplace. It is not such a grand step forward to envision a more comprehensive and experiential human interaction with data through technology.

Works Cited

Canada Evidence Act. R.S.C., 1985, C. C-5.

“Interview with Mr. Howard E. Grant.” Interview by Sandra M. Dunkin. Sept., and Nov. 2016.

Tsilhqot’in Nation v. British Columbia, 90-0913 BCSC 1700, 36 (2007).

Arenofsky, Janice. 2001. “Understanding how the Brain Works.” Current Health 1 24 (5): 6-11.

Bendall, Kate. 2003. “This is Your Life…” New Scientist 178 (2395): S4.

Cambor, Kate. 1999. “Remember This.” The American Scholar (Autumn).

Carmichael, Mary. 2004. “Medicine’s Next Level.” Newsweek 144 (23): 50.

Carr, Nicholas G. 2010. The Shallows. New York: Norton.

Connelly, Jim. 1995. “Designing Records and Document Retrieval Systems.” Records Management Quarterly (April).

Connolly, John. 1996. “You must Remember This.” Sciences 36 (3): 2.

Freed, Michael. 1997. “Re: Is Human Memory Similar to the RAM in a PC?” Aerospace Human Factors, NASA Ames Research Center, last modified January 6, accessed September 6, 2016, http://www.madsci.org/posts/archives/1997-03/852177186.Ns.r.html.

Gaidos, Susan. 2008. “Thanks for the Future Memories.” Science News 173 (19): 26-29.

Temple Grandin on Her Search Engine. 2016. Animated Video. Directed by David Gerlach. PBS Digital Studios: Blank on Blank.

Hermann, D. 1993. Improving Student Memory. Toronto: Hogrefe & Huber.

Herodotus. The Histories, edited by Aubrey de Selincourt. 1976. New York: Penguin Books.

Homer. Translated by Robert Fitzgerald. 1963. The Odyssey. New York: Anchor Books.

Hsieh, Sharpley. 2012. “The Language of Emotions in Music.” Australasian Science 33 (9): 18-20.

Kelber, Werner. 1995. “Language, Memory, and Sense Perception in the Religious and Technological Culture of Antiquity and the Middle Ages.” The Albert Lord and Milman Parry Lecture for 1993-1994. Oral Tradition 10 (2): 409-45.

MacManus, Seumas. 1967. The Story of the Irish Race. New York: The Devin Adair Company.

Marcus, Gary. 2009. “Total Recall: The Woman Who Can’t Forget.” Wired 17 (4). https://www.wired.com/2009/03/ff-perfectmemory/

Parker, Rodney. 1977. “The Architectonics of Memory: On Built Form and Built Thought.” Leonardo 30 (2): 147.

Paris, Ginette. 1990. Pagan Grace: Dionysos, Hermes, and Goddess Memory in Daily Life. Dallas: Spring Publications.

Plato. Translated by Harold N. Fowler. 1925. Phaedrus from Plato in Twelve Volumes, Vol. 9. London: William Heinemann Ltd. http://www.english.illinois.edu/-people-/faculty/debaron/482/482readings/phaedrus.html

Rakic, P. 2009. “Evolution of the Neocortex: Perspective from Developmental Biology.” Nature Reviews Neuroscience 10 (10): 724-735. http://doi.org/10.1038/nrn2719

Saul, John Ralston. 2008. A Fair Country: Telling Truths about Canada. Toronto: Viking Canada.

Schmickle, Sharon. 2010. “Why You Don’t Want the Dragon-Tattooed Lady’s Photographic Memory.” MinnPost.Com, July 8.

Wang, Yingxu. 2003. “Cognitive Informatics: A New Transdisciplinary Research Field.” Brain and Mind 4 (2): 115-127. doi:1025419826662.

Records Management in Canadian Universities: The Present and the Future

By Shan Jin, MLIS, CRM, CIP

Introduction

This article presents findings from in-depth interviews with twenty-six records managers, archivists and privacy officers who work in twenty-one Canadian universities. It provides a comprehensive view of current records management practices in Canadian universities. The main topics include program staffing, program placement, records retention schedules and classification schemes, physical records storage and destruction, university records centres, Electronic Document and Records Management Systems (EDRMS), training, outreach and marketing. It also examines the relationships between the records management program and internal stakeholders and identifies the need for knowledge sharing and collaboration in the academic records management community in Canada.

Literature Review

In both Canada and the United States, modern records management began in the federal government. Records management, as a professional management discipline, has been established for more than sixty years (Langemo 2; Fox 1). However, only a small number of scholarly articles have been written on records management programs in the higher education environment in North America, and even fewer focus on Canadian universities.

From the early days, university archival programs often assumed responsibility for records management (Saffady 204). Until recently, many universities’ records management functions still largely resided with the archivist (Zach and Peri 106). From 1990 to 2010, several studies on academic records management programs were conducted by researchers using surveys and interviews. Some were large-scale studies, such as Skemer and Williams’ 1990 survey on the state of records management, whose findings were based on responses from 449 four-year colleges and universities in the United States. Twenty years later, Zach and Peri conducted updated research on college and university electronic records management programs in the United States. Their article presented findings from their 2005 online survey of 193 institutions and interviews in 2006 with 22 academic archivists, as well as their 2009 online survey of 126 institutions. Although the focus of these two studies was not on Canadian universities, they provided some comparable data that are referenced in this article.

There were some small-scale studies which complemented the Zach and Peri research. Schina and Wells’ 2002 survey of fifteen American institutions and fifteen Canadian institutions provided relevant information from more than a decade ago, which is cited in the findings section of the article. Furthermore, there were two comparative studies that presented historical information on the records management programs in the University of British Columbia and Simon Fraser University (Brown, et al. 1-20; Külcü 85-107).

Higher education institutions have unique organizational structures and institutional cultures and traditions, which affect how records management programs operate within a university. Since there is a lack of comprehensive studies on records management programs in Canadian higher education institutions, this study will help to fill a research gap.

Research Scope and Methodology

Universities Canada (formerly known as the Association of Universities and Colleges of Canada) has ninety-seven member colleges and universities. Since it would be difficult to collect information from all of these universities over a short period of time, the author used a preliminary sample to set the criteria for selecting participating universities.

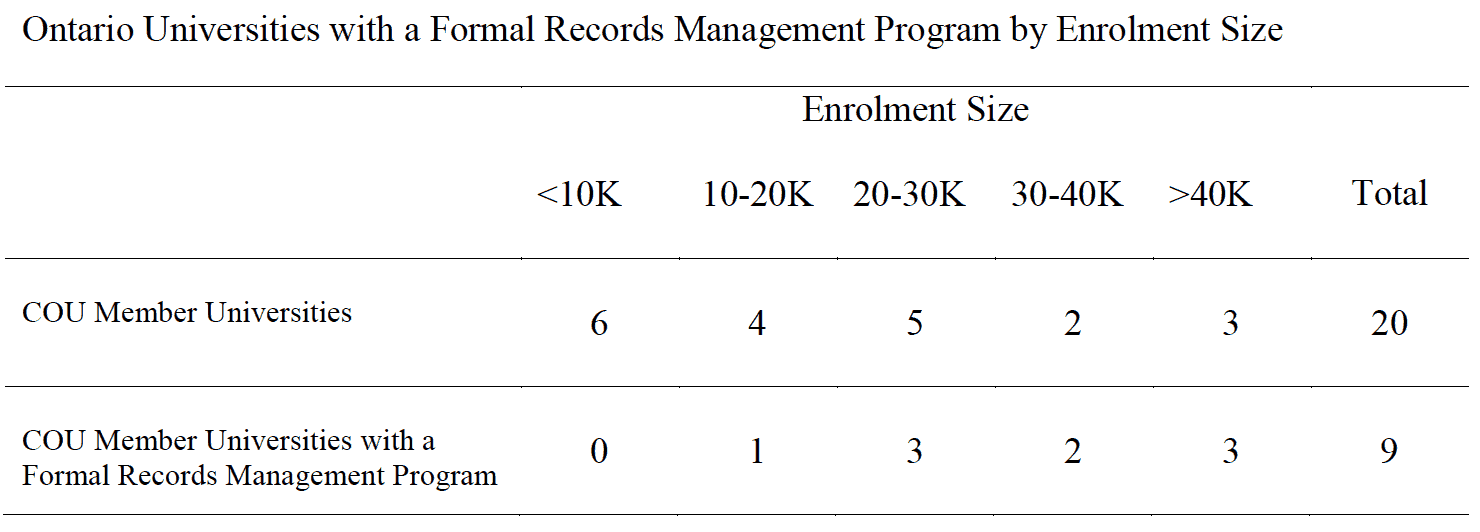

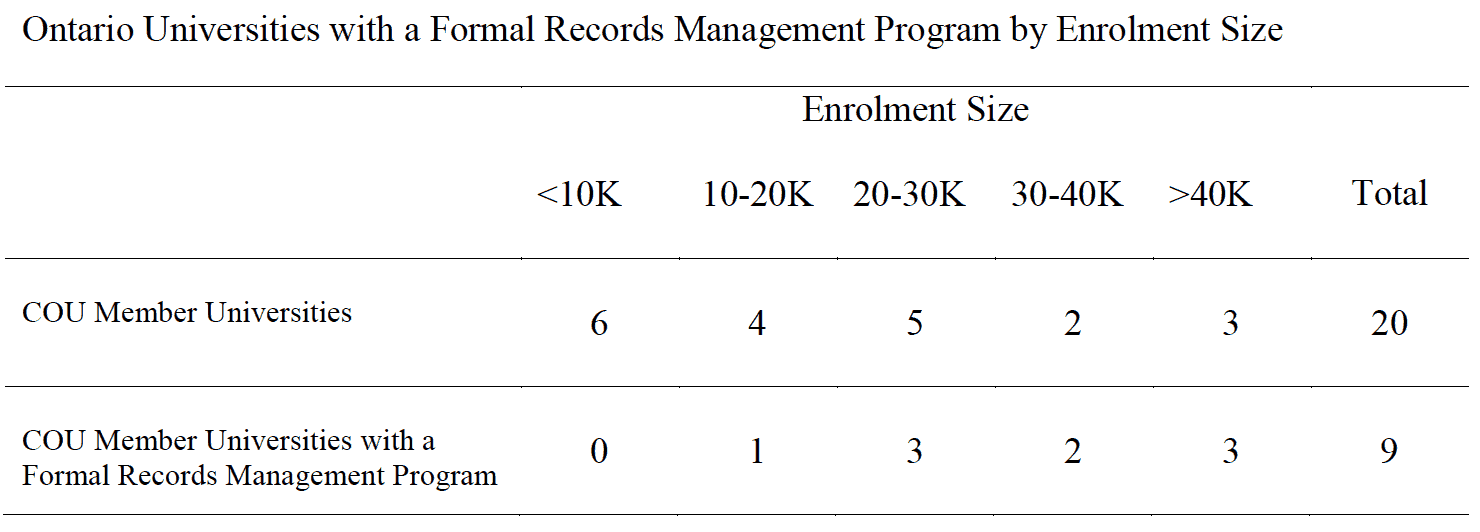

A quick email survey was sent to the records managers, archivists, or privacy officers of twenty Council of Ontario Universities (COU) members. The author asked these universities if they had a formal records management program with at least one employee who worked on records management for a minimum of fifty percent of his or her time. As the survey responses demonstrate, none of the small Ontario universities (those with fewer than 10,000 students) had such a records management program (see table 1). Based on this finding, the author decided that eligible universities for this study would be those with an enrolment of at least 10,000 students, because those are more likely to have a formal records management program.
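The two thresholds described above can be expressed as simple checks. The following is only an illustrative sketch: the class, the field names, and the three sample institutions are hypothetical placeholders, not data from the survey, and the sketch reduces the survey question to its fifty-percent time threshold.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class University:
    name: str             # placeholder label, not a real institution
    enrolment: int        # total student enrolment
    rm_time_share: float  # largest share of one employee's time spent on records management


def has_formal_program(u: University) -> bool:
    """Survey threshold: someone spends at least half their time on records management."""
    return u.rm_time_share >= 0.5


def is_eligible(u: University) -> bool:
    """Study criterion: enrolment of at least 10,000 students."""
    return u.enrolment >= 10_000


# Hypothetical sample, for illustration only.
sample = [
    University("University A", 8_500, 0.2),
    University("University B", 10_000, 0.5),
    University("University C", 32_000, 1.0),
]
eligible_names = [u.name for u in sample if is_eligible(u)]
print(eligible_names)
```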

Table 1

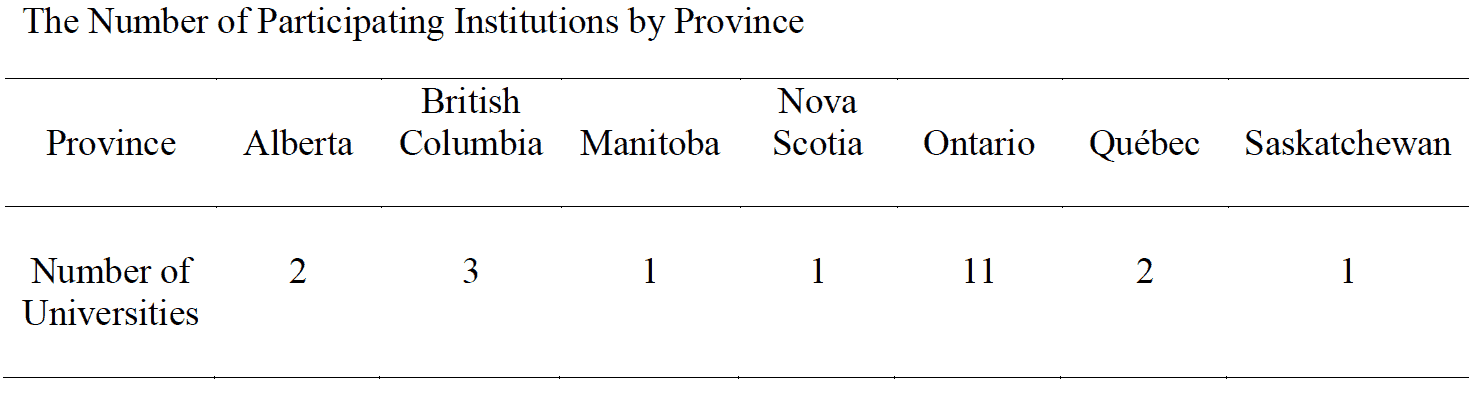

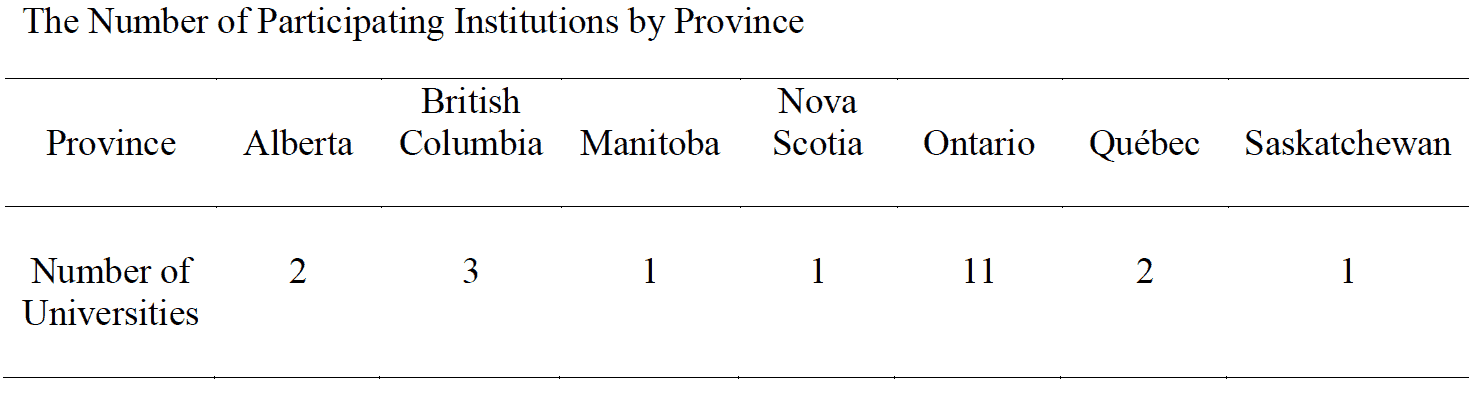

Due to limited resources for the study, the author chose to collect data using individual interviews instead of large-scale surveys. Between April 2015 and January 2016, thirty potential participants were contacted via email with a cover letter and a consent form and invited to participate in the study. Eventually, twenty-six records managers, archivists, and privacy officers from twenty-one publicly-assisted Canadian universities agreed to be interviewed. Table 2 lists the number of participating institutions by province.

Table 2

Upon receipt of the consent forms from participants, an in-depth 90-120 minute interview was scheduled with each participant. A questionnaire was sent to them ahead of the scheduled interview so they could prepare for it. Each interview was conducted in one of three ways: face to face, by telephone, or using video conferencing technology. An audio recording was made with the permission of each participant. Eight site visits were also made during the same ten-month period. Additional information was gathered from email follow-ups and from the web sites of the participating institutions. To protect the anonymity of participants, findings of this study reflect group results and not information about specific individuals or universities, with the exception of publicly available information.

Findings and Common Concerns

Program Staffing

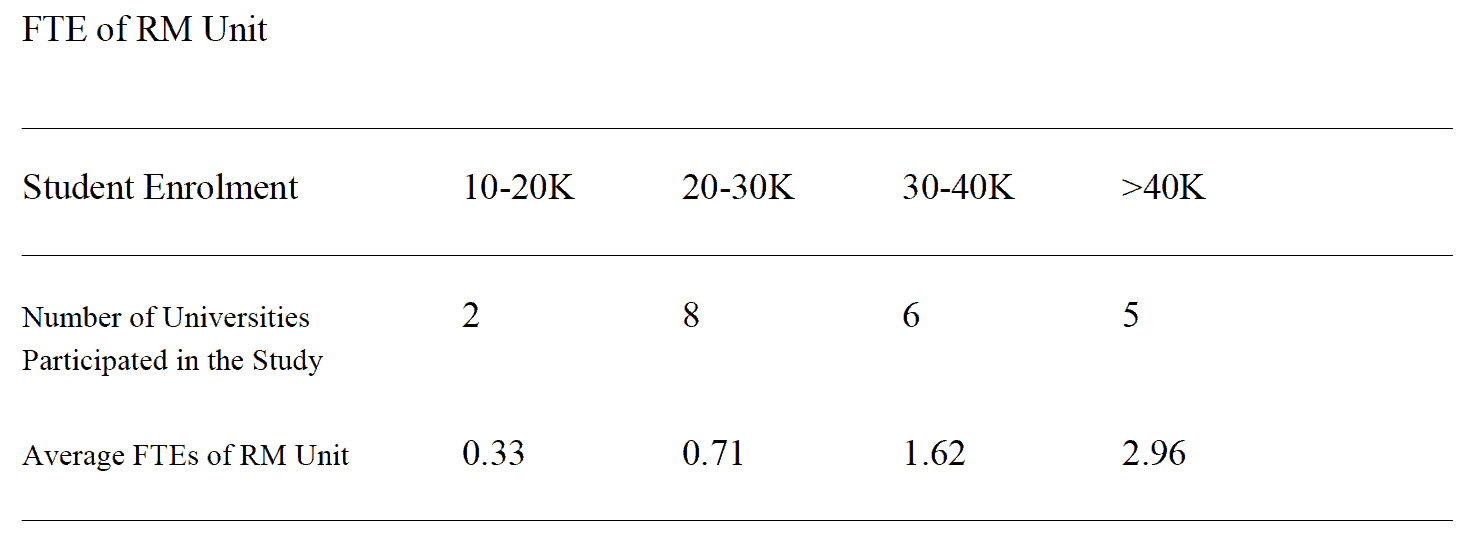

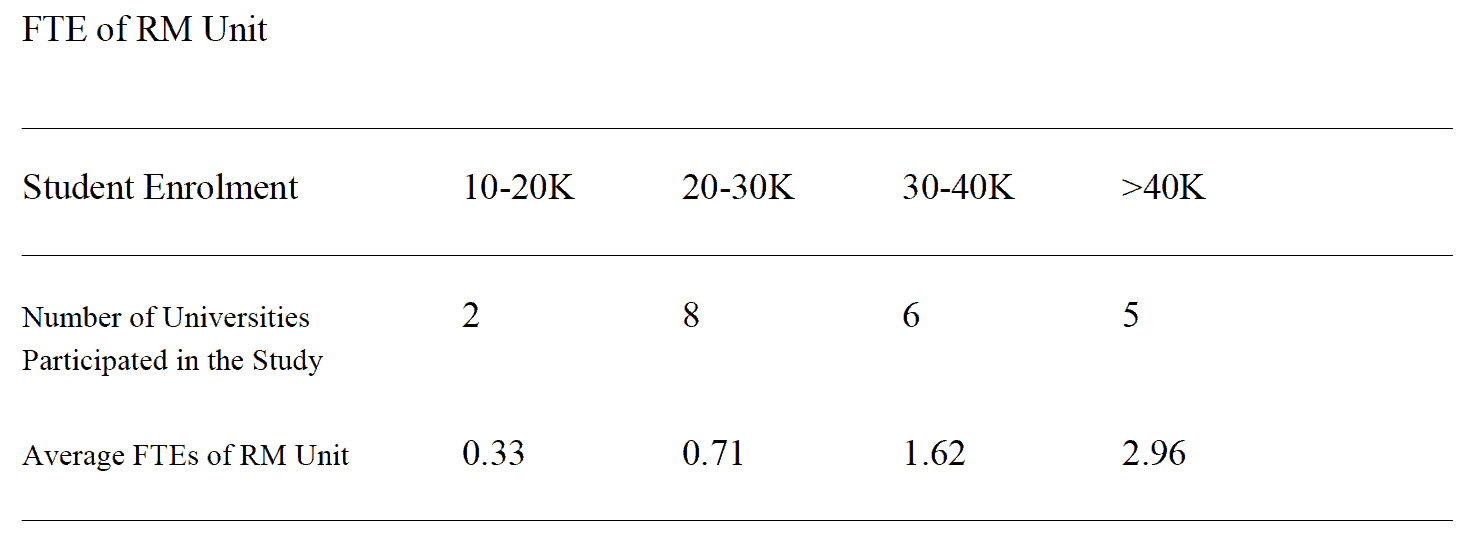

The study looked at the educational level of the persons responsible for the records management programs. Eighty-eight percent of the twenty-six participants have one or two master’s degrees in library and information studies, archival studies, or history. Thirty-eight percent of the twenty-six participants were hired or moved to their current records management related positions in the last three years. The data gathered from the interviews are listed in table 3. They show that the larger the student enrolment of the university, the higher the full-time equivalent (FTE) number of its records management unit personnel.

Table 3

The author also asked the participants what percentage of their time was devoted to records management related duties. The responses show that many of the participants have responsibilities in areas other than records management. On average, they spend 67% of their time on records management.

Records Management Program Administrative Placement

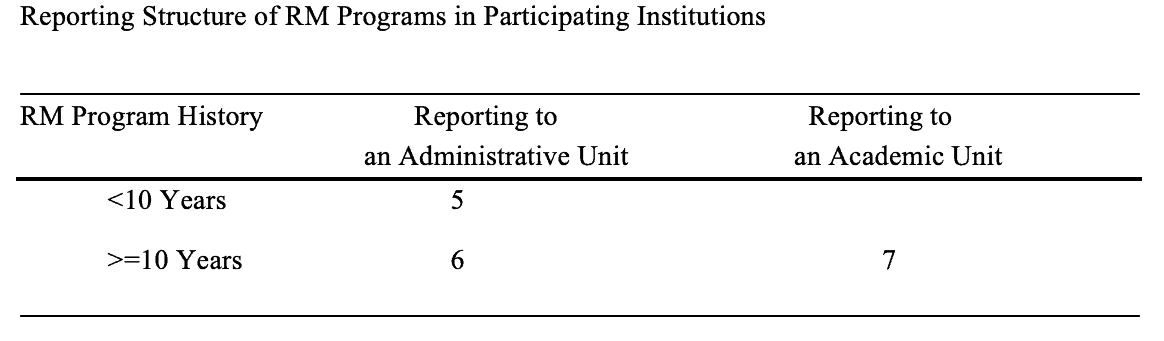

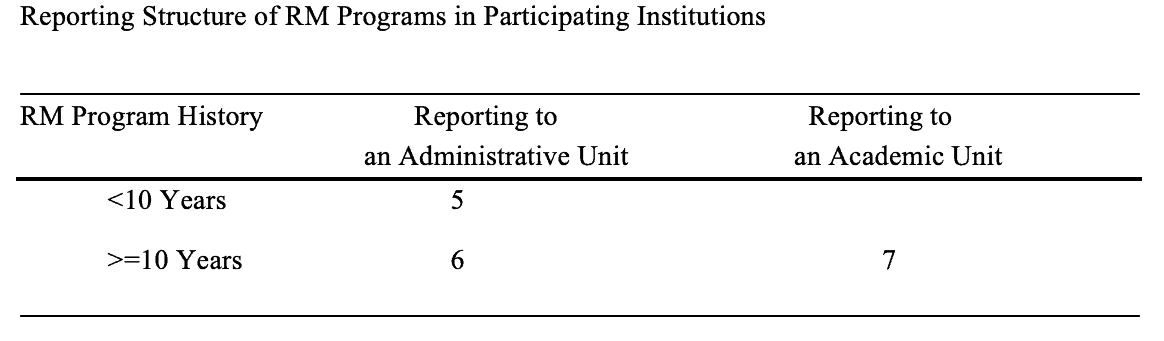

Unlike government agencies and private companies, Canadian universities often have a shared governance system. The academic side directly supports teaching, learning and research functions, and the non-academic side supports administrative functions. Early university records management programs often reported to university archives, an academic unit that is usually a part of the university libraries. Data collected from the interviews show that records management programs established in the last decade are moving away from university archives and libraries and instead report to a senior administrative department, such as the University Secretariat or General Counsel.

Eighteen of the twenty-one universities that participated in this study have a formal records management program. All five newer programs (<10 years) report to an administrative unit. Older records management programs (>=10 years) are split, with six reporting to a senior administrative department and seven reporting to an academic department. In total, 61% of the eighteen records management programs report to a senior administrative unit; the rest report to an academic unit (see table 4).
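The 61% figure follows directly from the counts reported above. A quick check in Python, where the dictionary layout and labels are only one illustrative way of arranging the reported numbers:

```python
# Counts reported in the text: programs by age group and reporting line.
placements = {
    ("newer (<10 years)", "administrative"): 5,
    ("older (>=10 years)", "administrative"): 6,
    ("older (>=10 years)", "academic"): 7,
}

total = sum(placements.values())
administrative = sum(
    count for (_, unit), count in placements.items() if unit == "administrative"
)
share = round(100 * administrative / total)

print(f"{administrative} of {total} programs ({share}%) report to an administrative unit")
```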

Table 4

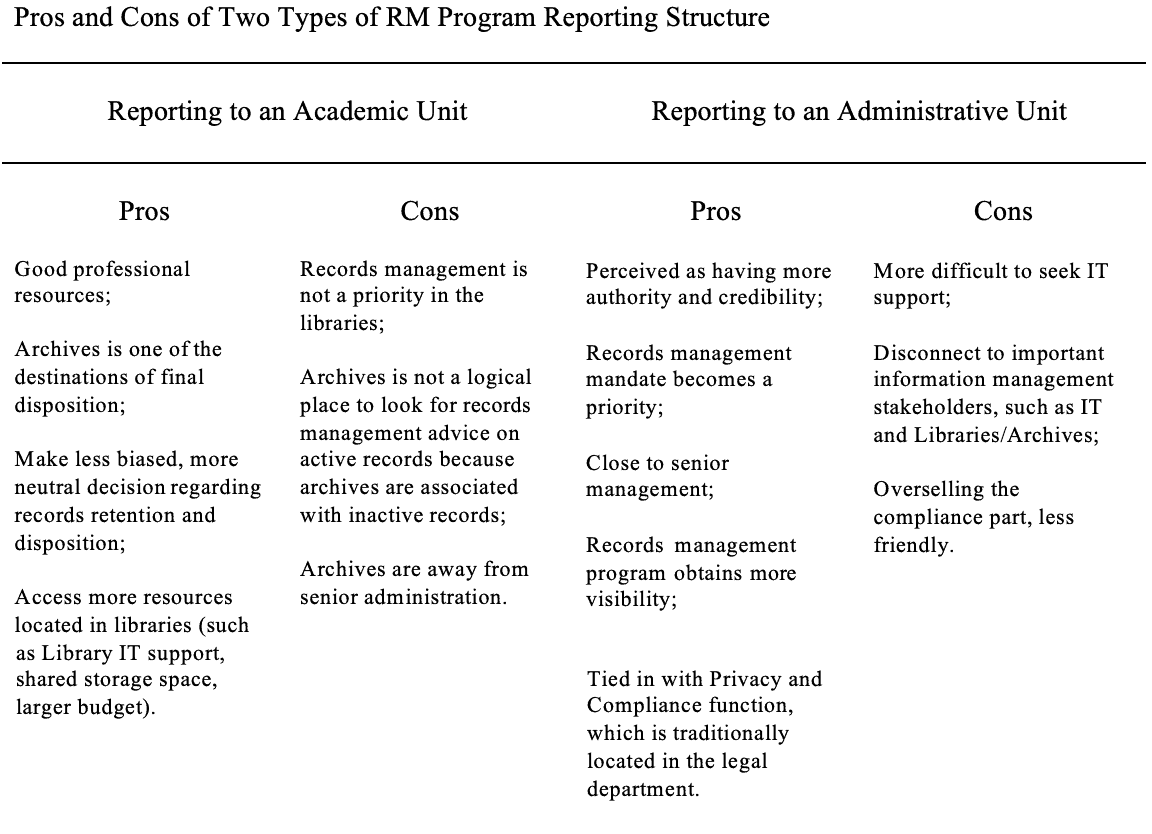

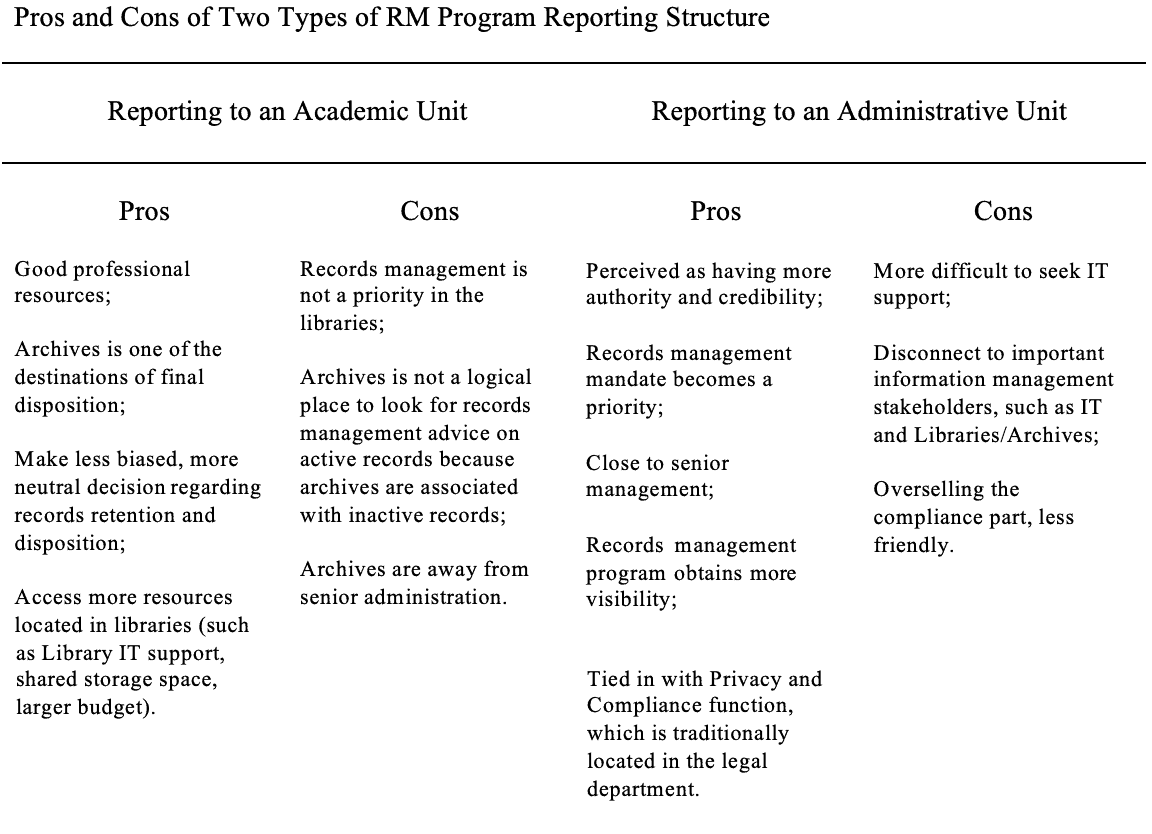

All participants of the study shared their thoughts on the pros and cons of both placements. As summarized in table 5, both reporting structures have their strengths and weaknesses. Archivist William Maher provided some interesting insights in a discussion on academic archives’ administrative location in his book The Management of College and University Archives.

Maher pointed out there was “no single location that is best for all purposes” (23). He went on to say that too often “attention to the question of location is driven by dissatisfaction with limits imposed by the current parent department and the hope that some other parent would provide better support” (23). Although Maher was talking about archivists’ opinions on the administrative location of academic archives in the hierarchy of the college or university, participants of this study seem to have a similar mentality when discussing the records management program’s organizational placement. Regardless of where the records management program is located, the author believes that records managers must capitalize on the advantages and overcome the disadvantages of its organizational structure in order to improve records management services. It is important to align the efforts of the records management program with those of other strategic partners such as archives, Information Technology (IT) security, the legal department, and the privacy and compliance office.

Table 5

Records Retention Schedule and Classification Schemes

Records retention schedules and classification schemes are basic components of a sound records management program (Kunde 190). All participating universities that have a formal records management program have established a classification scheme. According to participants of this study, developing records schedules is an ongoing task. Common records schedules are a priority because these schedules are used by all university departments.

The processes for drafting records retention schedules are very similar from one Canadian university to another, but final approval processes vary dramatically. Records schedules are:

- Approved by a University Records Management Committee;

- Signed off by a records director, or the president of the university, or a non-records management specific senior committee;

- Not formally approved by any group in the university.

In Québec, the Archives Act requires that “every public body shall establish and keep up to date a retention schedule determining the periods of use and medium of retention of its active and semi-active documents and indicating which inactive documents are to be preserved permanently, and which are to be disposed of” (3).

Also, the Act requires every public body to, “in accordance with the regulations, submit its retention schedule and every modification of the schedule to Bibliothèque et Archives Nationales for approval” (3). Such a process takes a long time; however, its biggest advantage is that the schedules become law. Going through the provincial government approval process gives the records schedules greater validity, and compliance with schedules is mandatory in Québec.
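The elements that the Archives Act asks a retention schedule to record (periods of use, medium of retention, and the fate of inactive documents) can be pictured as a small data structure. The sketch below is illustrative only: the class, the field names, and the sample series with its retention periods are hypothetical, and are not drawn from any university's schedule or from the Act's regulations.

```python
from dataclasses import dataclass
from enum import Enum


class Disposition(Enum):
    PRESERVE = "preserve permanently"
    DISPOSE = "dispose of"


@dataclass(frozen=True)
class ScheduleEntry:
    series: str                        # name of the records series
    active_years: int                  # period of use as an active document
    semi_active_years: int             # period of use as a semi-active document
    medium: str                        # medium of retention, e.g. "paper"
    inactive_disposition: Disposition  # what happens once the record is inactive

    def stage(self, age_years: int) -> str:
        """Return the lifecycle stage of a record of the given age."""
        if age_years < self.active_years:
            return "active"
        if age_years < self.active_years + self.semi_active_years:
            return "semi-active"
        return f"inactive: {self.inactive_disposition.value}"


# Hypothetical entry, for illustration only.
entry = ScheduleEntry("Committee minutes", 2, 5, "paper", Disposition.PRESERVE)
for age in (1, 4, 10):
    print(age, entry.stage(age))
```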

In provinces outside Québec, compliance with records retention schedules is the responsibility of individual offices and is voluntary. Based on the study findings, university records managers often take on advisory or assistance roles. It is not their mandate to act as the records management police, for instance by enforcing compliance with retention schedules at the departmental level, but university records managers can encourage compliance by:

- Defining roles for department/unit heads and staff in records management policy;

- Providing training and creating tools to assist employees with records management tasks;

- Using persuasion to encourage employees to use records retention schedules and classification schemes to manage records; and

- Setting up a departmental records management coordinators network for better communication.

Physical Records Storage and Destruction Services

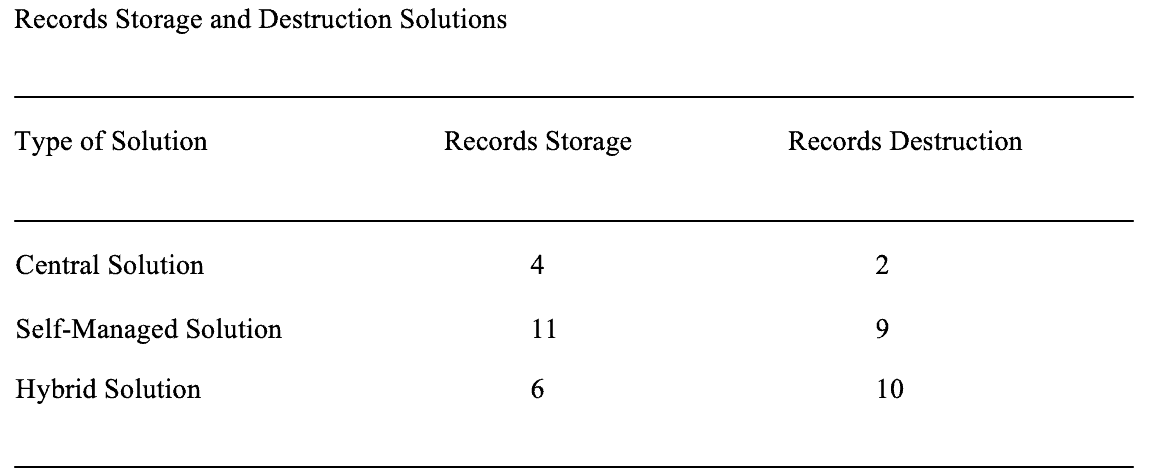

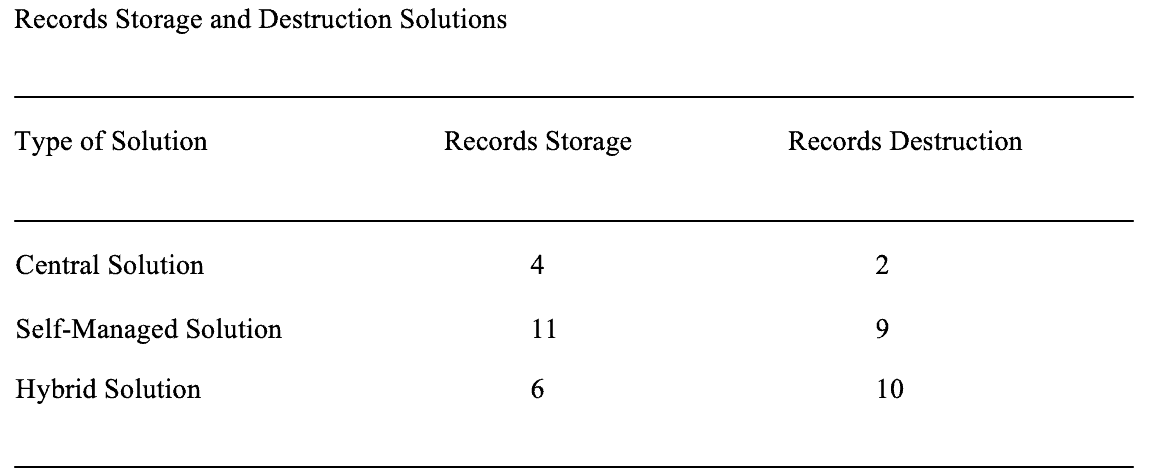

Canadian universities often have a decentralized budget model. When it comes to records storage and destruction, each department or unit is likely to adopt a self-managed solution, but some universities still make an effort to provide a central or a hybrid solution.

Data collected from the interviews indicate that:

- Four universities have set up a University Records Centre or use a commercial facility for records storage. All activities are monitored by the records management program;

- Six universities have a hybrid solution whereby departments and units can choose from using a centrally managed storage service or managing records on their own;

- Many universities use policies and preferred vendors to regulate records storage and destruction activities on campus;

- Fifteen out of the twenty-one universities (71%) have developed records destruction procedures to formalize records destruction activities;

- Fourteen out of the twenty-one universities (67%) have a preferred shredding service provider;

- Only two universities have total control of records destruction on campus, and destruction activities are carried out through their University Records Centres.

Most of the records destruction activities are self-managed by departments (see table 6).

Table 6